April 2026

The Undesigned Middle

Most organizations are deploying AI. Far fewer are building anything that earns trust.

Anuradha Sachdev built one of the first teams to design and deploy agentic AI in customer service at enterprise scale.

IN BRIEF

Fewer than 6% of organizations report meaningful financial impact from their AI investments, despite near-universal adoption.¹ This is not a technology problem. It is a leadership problem. Organizations that lead on customer experience grow revenue 41% faster and profit 49% faster than those that do not.² This piece is about what they do differently.

One of the world's largest logistics companies had a clear mandate: move from a volume-driven shipping operation to a data-driven, intelligent network. On one side, teams were losing customers and scrambling to restore the basics — on-time delivery, fast resolution, the fundamental reliability that trust is built on. On the other, an AI transformation agenda was gathering momentum — new platforms, new capabilities, a new operating model.

Both efforts were real. Both were urgent. Neither had a clear line to the other.

That space between them — where a CEO's transformation vision meets the customer who just needs their shipment to arrive — was largely undesigned.

The gap between intent and impact is no longer a design problem. It is a structural one. Call it The Undesigned Middle.

THE DIAGNOSIS

The story is not unusual. It is the pattern.

The gap between transformation agenda and customer reality — and what it actually takes to close it.

Two years ago, Accenture Song asked me to build a customer service experience practice from scratch, designing and deploying AI for real clients, at scale, before most organizations had moved from debate to delivery.

Across every engagement, it started the same way. A narrow ask. And underneath it, every time, the same gap.

We were brought in through a narrow door: a request to redesign key transactions on the organization's website. What we found was something the brief had no language for: two efforts running in parallel, one fighting to restore the basics, one building toward the future, each with its own leadership, its own roadmap, its own definition of success. The connection between them was loose.

What became obvious, quickly, was that the request itself was the wrong unit of work. The pressure to keep moving made it nearly impossible for the organization to stop and ask whether all the pieces were adding up to something coherent. Every team was planting trees. Nobody had been asked to tend the forest.

The reframe changed that. The team that brought us in had a specific mandate: fix the website, reduce friction, restore the basics. The team running the AI transformation had an entirely different one: reimagine how the company operates at scale. Two mandates, two roadmaps, no connection between them. The moment we zoomed out, that changed. The experience work and the AI work were suddenly pointed at the same thing. And something else became clear: most organizations build the same experience three times, once for enterprise, once for end customers, once for small business owners, because nobody stopped to design it as a system. Design it once, and it works across every channel, the website, the app, the phone, whichever one the customer reaches for in that moment.

The organizations that get this right discover that reducing cost and improving experience are not a tradeoff. AI done right delivers both. The ones that treat it as a tradeoff end up with systems that are fast, capable, and quietly misaligned with the people they were built to serve.

Every project begins with a reframe: the moment we understand what is actually being asked, and why an answer to the wrong question will not be enough.

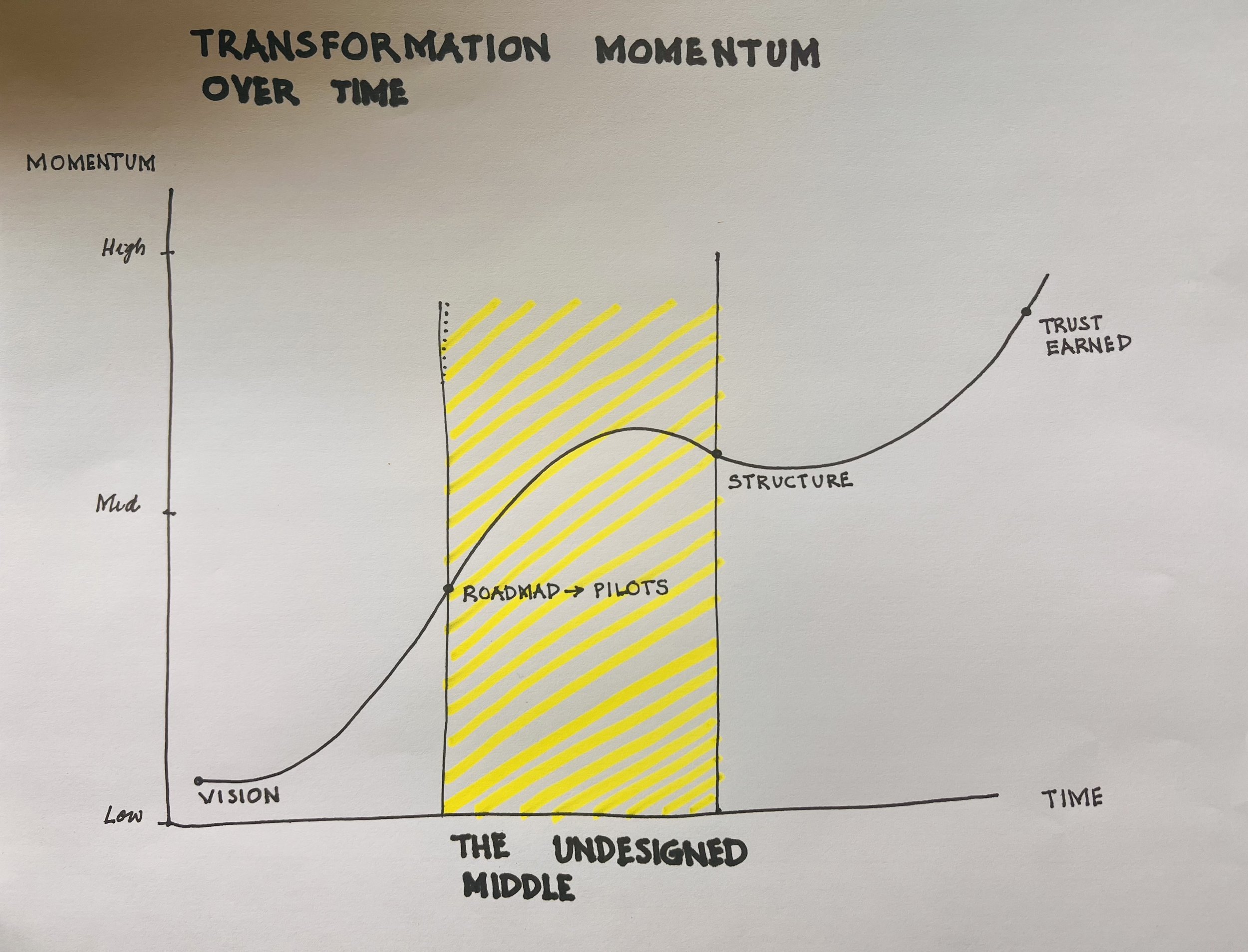

Every transformation has a moment where vision has been declared and structure hasn't arrived yet. That gap between roadmap and proof is where most initiatives quietly lose momentum.

It's also where the real design work lives.

BEFORE THE STRUCTURE

Four questions. Answer them before you build.

The questions most organizations skip — and why skipping them is no longer an option.

I have seen what happens when these questions go unasked — the purpose question answered too late, the wrong person centered in the room, success focused on narrow cost savings alone. These are not new questions. They predate AI, predate digital transformation, predate most of the platforms being built today. Every good product, every service worth trusting, was built by someone who answered four questions before they built anything. The discipline separates work that endures from work that merely ships. AI does not change these questions. It makes skipping them unforgivable.

WHY

The purpose test. It sounds simple until you sit in a room where the answer is "reduce cost" dressed up as "improve the customer experience." The systems built from each intention feel entirely different to the people who use them. Purpose is not a mission statement. It is the decision that everything else follows from. Without it, capability fills the vacuum — and at scale, that produces consequences no dashboard was designed to catch.

WHO

Whose needs are you actually designing for? This is not a demographic question. It is a power question. Whose needs are centered in the room where decisions are made — and whose are assumed, approximated, or simply absent? The answer is almost always the person who can least afford for the system to get it wrong. Naming them is an act of organizational courage — because it means choosing whose experience takes priority. The harder truth is that naming them does not guarantee they are prioritized. The deciding factor is often the person the business can least afford to lose — and those two are not always the same person.

WHAT MATTERS

How will you know if it's working — before you launch? A system built without agreed measures of human and business impact is not an experiment — it is a guess with consequences. But here is the harder truth: the right measures are not just better efficiency metrics. A call center that tracks response time, handle time, and agent tone — while the person on the other end still has no resolution — is not measuring service. It is measuring activity. The discipline is not finding better proxies. It is deciding, before you build, that the person's actual outcome matters as much as the numbers that are easier to count. Most organizations can tell you their efficiency metrics. Fewer can tell you whether anyone trusts them more.

DO NO HARM

This is not a legal obligation, though it is that too. It is a professional ethic — and one that is easier to declare than to practice. I helped build brain atlas software at LONI, the Laboratory of Neuro Imaging. The atlases we worked from were built predominantly from Western populations. The gaps were not hypothetical. The system acts on what was documented. It has no way of knowing what wasn't. Every leader building AI systems today is making the same kind of decision — about whose experience is in the data and whose is assumed. The question is not whether gaps exist. It is whether anyone stopped to ask before the system shipped.

These four questions are the precondition. In most enterprise AI transformations today, they are also the work most organizations still need to do.

THE PATH AHEAD

The structure that turns intent into reality

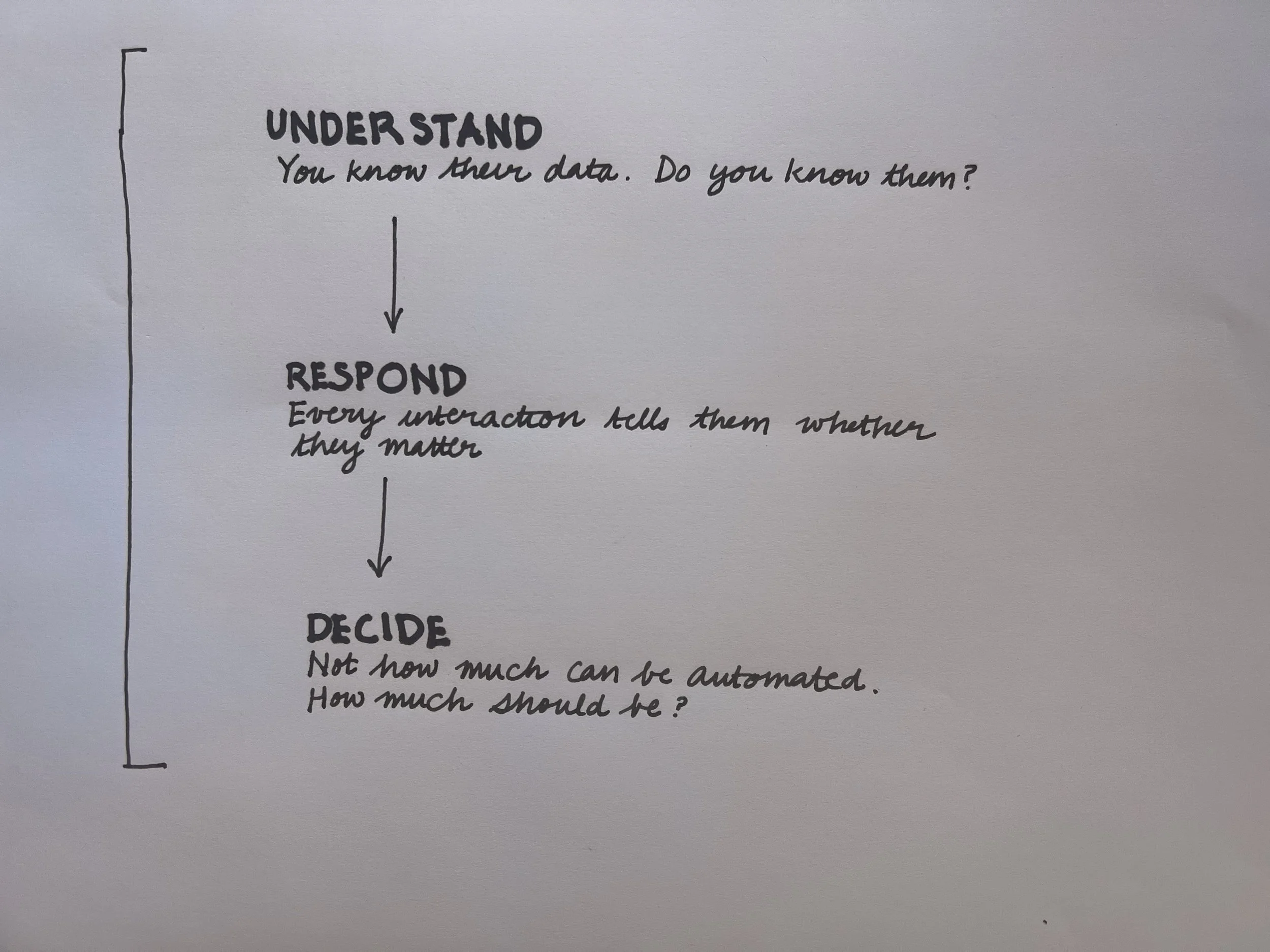

Understand who you are building for. Respond across every moment. Decide where human judgment must lead.

1. UNDERSTAND

Most enterprise AI systems know a great deal about their users. What they rarely know is why. Not the inferred why of a recommendation engine — the human why. The context a person carries into an interaction that no data point captures.

| Do you actually know these people — or do you know their data?

In one engagement, redesigning how a global professional services firm listened to its most significant client relationships, we started with fifty-five direct conversations with C-suite leaders and the teams who worked closest with them — on both sides of the relationship. What emerged was not a set of satisfaction scores. It was a map of the moments that mattered most and were least understood — where a client needed a partner to see around corners and instead received a process, where the relationship was strong on paper and fragile in practice, where the work being done was never connected to the outcome the client actually cared about.

2. RESPOND

Understanding who the system is being built for is necessary. It is not sufficient. The harder problem is what the system does with that understanding — across every channel, every moment, every human state a person might arrive in.

| The system either understands you — or it doesn't. Every interaction is a signal about whether you are valued.

In another engagement, customers were making significant purchases — and then, when the delivery date arrived, hearing nothing. As one customer put it: we make the big purchase, and when the delivery date rolls around, silence. That silence is not a notification failure. It is a trust failure. The app changed that — real-time visibility, proactive alerts, options when things went wrong, and a public commitment to on-time performance measured against stated goals. The hard part was not the technology. It was the organizational courage to make the commitment visible.

3. DECIDE

The most consequential design decision in any AI system is not what it does. It is what it does not do — and who is responsible when it gets that wrong. Every system that touches a human life reaches moments where the stakes are too high for automation to be the right answer. They are the moments that determine whether trust is built or broken.

| Not how much can be automated. How much should be. That question belongs to humans.

When we redesigned the unified portal for the State of New Mexico Human Services Department — used by more than half the state's population — the stakes were not abstract. Residents navigated four separate division offices to access benefits, recertifying repeatedly to avoid losing them. Then one woman told us she could not go to the child support office. Doing so would reveal her location to an abusive partner. The system had no way to know that. A workflow could not decide that. A person had to.

The Understand / Respond / Decide framework — three questions that sound simple until you try to answer them honestly.

THE IMPERATIVE

The organizations that will matter will do this work right.

The work that determines whether everything else holds together.

AI is moving faster than most organizations can design for. The gap between what is being built and what people actually experience is not closing — it is widening. Every quarter that passes without naming and closing the Undesigned Middle is a quarter of customer trust eroded — quietly, at scale. And customers are not the only ones paying the cost. Every employee at the front line is absorbing friction that the system was never designed to resolve — carrying the gap between what AI promised and what it actually delivered.

The organizations that will get this right are not the ones moving fastest. They are the ones willing to stop and ask the right questions before they build. And the ones that do will not just build better products. They will build better businesses. The evidence is consistent. Organizations that lead on customer experience grow revenue 41% faster and profit 49% faster than those that do not.² McKinsey's 2023 research found that experience-led organizations achieve cross-sell rates 15–25% higher and share of wallet 5–10% greater than their peers.³ Cost-driven transformation produces finite gains. Experience-led transformation compounds.

The Undesigned Middle is not a gap that closes itself. It is structural. It is human. And it is, in the end, the only work that compounds — into retention, into revenue, and into a competitive position no technology investment alone can build.

1. McKinsey & Company, The State of AI 2025: Agents, Innovation, and Transformation, November 2025. Of 1,993 survey respondents across 105 nations, only 5.5% reported that more than 5% of their organization's EBIT and "significant value" were attributable to AI.

2. Forrester Research, 2024 US Customer Experience Index, June 2024. CX leaders vs. CX laggards across revenue growth, profit growth, and customer retention.

3. McKinsey & Company, “Experience-led growth: A new way to create value,” March 2023.

* Client organizations referenced in this piece have been anonymized at their request. Case details have been reviewed for accuracy.

Anuradha Sachdev built and led the North America customer service experience practice at Accenture Song — one of the first teams to design and deploy agentic AI in customer service at enterprise scale. She writes about what that work revealed.