April 2026

Designing the Undesigned Middle

The problem is no longer what systems can do. It is how they behave when capability runs out.

IN BRIEF: Most AI systems are built around what they can do. Fewer are built around how they behave when capability is not enough: in the moments of ambiguity, incomplete context, and real human stakes that define how people actually experience them. This piece works through five design constraints for building systems that hold up under those conditions. Each one comes with the questions to test it against the work already in progress. The goal is not a better framework. It is a better decision, made before something ships that shouldn't.

A CEO of a telehealth company — where getting it wrong means a patient doesn't get the care they need — asked a question after reading about The Undesigned Middle: how do you measure the impact on trust before you launch?

Most organizations can't answer that. This piece is about why — and what to do instead.

The issue is rarely effort or intent. Smart people are working hard on this. What is often missing is the structure beneath the work — the questions that should be asked before anything is built, the measures that should be agreed before anything is launched. That gap is not a technology problem. It is a design problem. And it starts with behavior — how the system acts when automation is not enough.

The principles that follow are not new. Good organizations have always applied them. What AI changes is what it costs to skip them.

BEFORE YOU BEGIN: How to use this

Not for a strategy offsite. For the room where the real decisions get made.

THREE MODES

In a product or ops review: Pick two or three principles. Go deep, not broad. The goal is not to score the system; it is to find the one place where the standard is not being met. These questions work in both directions: before you build, to shape what gets built right; and after, to find where it broke down. The product review is where both happen.

In a working session around a specific breakdown: Take one failure. Run all five against it. The fail signal that appears most often is where to start.

In a leadership discussion: Focus on three questions: where does automation stop? Who owns the outcome? What are we actually measuring? If those three cannot be answered clearly, the system is not yet designed.

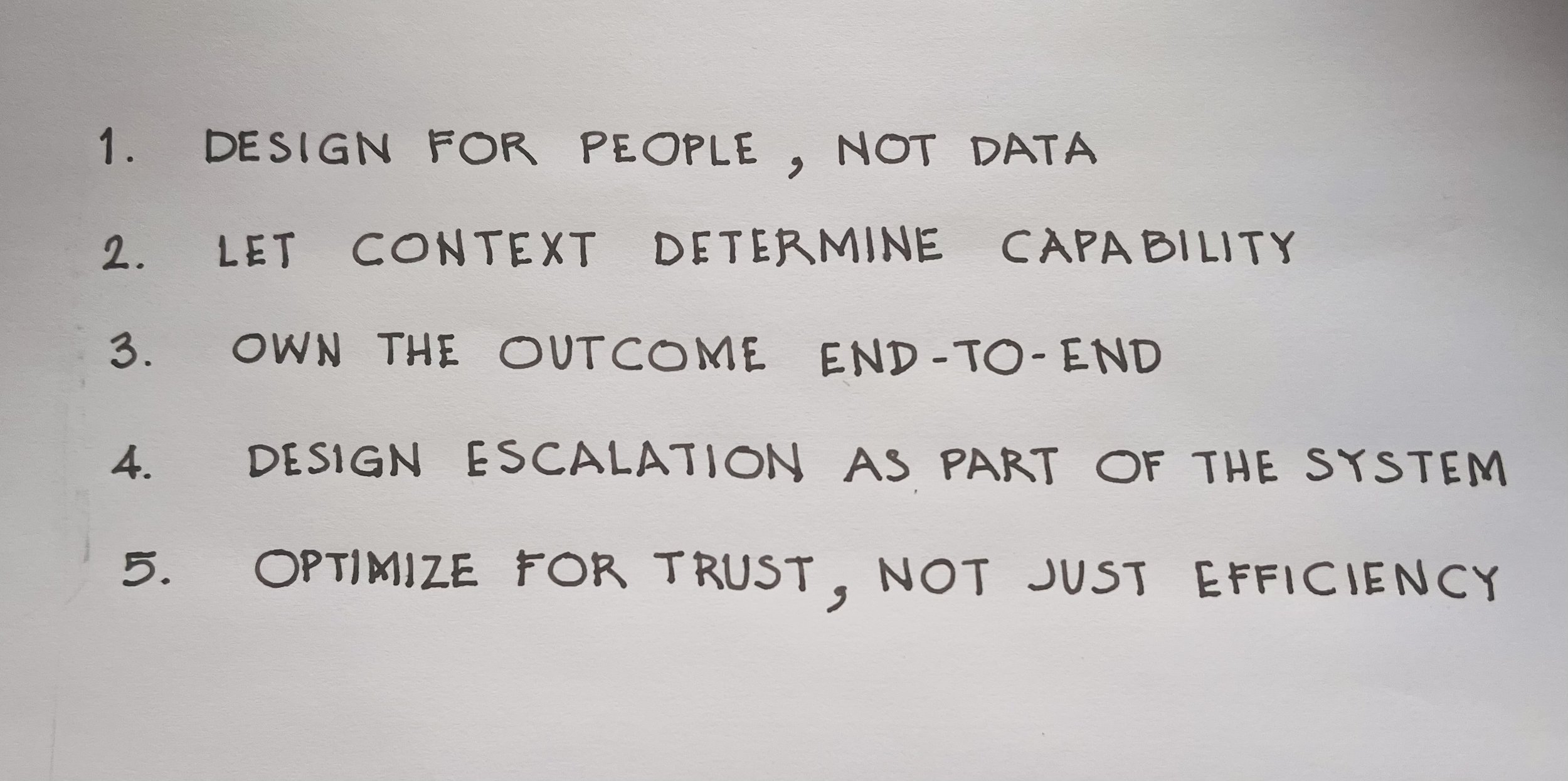

THE DIAGNOSIS: The five principles

Not principles to agree with. Standards to test against.

PRINCIPLE 1: Design for the person, not the data

The system has the data. What it rarely knows is what the person is actually dealing with right now.

A small business owner applying for a loan after a difficult year is more than their credit score. The context — a single bad quarter, a business that has recovered — is not in the data. That gap is what the system cannot see. And it changes everything about the right response.

The discipline is not adding more capability. It is designing a system that knows when it might be wrong — and makes room for the person to say so.

WHAT THIS TESTS: Are we mistaking data for understanding?

Is there context the person has that the system is acting as if it doesn't need?

Can we name one assumption the system is making that could be wrong?

Can the person correct or override what the system assumes — and if not, why not?

Are we acting with confidence where uncertainty exists?

FAIL SIGNAL: The system proceeds as if it fully understands.

PRINCIPLE 2: Read the situation before acting

Not everything that can be automated should be. Automation is effective where context is stable and the cost of being wrong is low. It breaks down where context is ambiguous, stakes are high, or impact is uneven.

A patient discharge system that automatically sends post-surgery care instructions by SMS is appropriate in most cases. It is wrong when the patient lives alone, has no reliable internet access, or doesn't speak the language the instructions were written in. The automation isn't the problem. The absence of a defined limit is. Most systems discover where automation breaks down through failure — after the person has already paid the cost.

The discipline is defining where automation should adapt, pause, or stop — before the system reveals those limits on its own.
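One way to make that limit explicit is to write it down as a rule the system checks before it sends anything. The sketch below uses the discharge example; the field names, the `Action` values, and the choice to stop when a patient lives alone or when context is missing are illustrative assumptions, not a clinical standard.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    CONTINUE = "continue"  # send the SMS instructions as usual
    ADAPT = "adapt"        # change channel or language before sending
    STOP = "stop"          # hold automation and route to a person


@dataclass
class PatientContext:
    # Hypothetical fields. None means the system does not know.
    lives_alone: Optional[bool]
    has_reliable_internet: Optional[bool]
    preferred_language: Optional[str]


def decide_discharge_action(ctx: PatientContext,
                            instructions_language: str = "en") -> Action:
    """Decide whether automated SMS instructions should go out."""
    known = (ctx.lives_alone, ctx.has_reliable_internet, ctx.preferred_language)
    # Assumption: missing context is a reason to stop, not a green light.
    if any(value is None for value in known):
        return Action.STOP
    # Assumption: a patient living alone gets a human follow-up.
    if ctx.lives_alone:
        return Action.STOP
    # The message can still be sent, but not in its default form.
    if not ctx.has_reliable_internet or ctx.preferred_language != instructions_language:
        return Action.ADAPT
    return Action.CONTINUE
```

The value is less in the code than in the conversation it forces: someone has to decide, in advance, which conditions mean continue, adapt, or stop.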

WHAT THIS TESTS: Are we responding to the situation — or just following the script?

Have we defined where the system should adapt or stop — or are we waiting for failure to define it for us?

Can we identify where context becomes incomplete or ambiguous in this system?

Can we name where the cost of being wrong increases — and have we designed for it?

Should the system continue, adapt, or stop at this point?

FAIL SIGNAL: The system continues because it can, not because it should.

PRINCIPLE 3: Own the outcome, not the completion

Most systems are designed to complete a process. They are not designed to resolve a need.

A benefits recertification system marks the case complete the moment the form is submitted. The person doesn't know if the submission was received correctly, whether anything is missing, who is reviewing it, or when they will hear back. The process moved forward. The person is still waiting — often on a benefit they cannot afford to lose. That information exists somewhere in the organization. It just wasn't designed to reach them.

The discipline is extending responsibility beyond the process — until the need is actually resolved. Not "submitted." Not "processing." Clarity on what happens next, who owns it, and when it will be done.
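A simple check can make this testable in a review: for any status shown to the person, list what they still cannot see. This is a minimal sketch; the `CaseStatus` fields and the function name are hypothetical, chosen to mirror the three things named above (what happens next, who owns it, and when it will be done).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class CaseStatus:
    state: str                          # e.g. "submitted", "in review"
    owner: Optional[str] = None         # who is responsible right now
    next_step: Optional[str] = None     # what happens next
    decision_due: Optional[date] = None # when the person will hear back


def gaps_for_person(status: CaseStatus) -> List[str]:
    """Return the pieces of clarity the person is still missing."""
    gaps = []
    if not status.owner:
        gaps.append("owner")
    if not status.next_step:
        gaps.append("next_step")
    if status.decision_due is None:
        gaps.append("decision_due")
    return gaps
```

A bare `CaseStatus("submitted")` returns all three gaps, which is the fail signal for this principle expressed as data.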

WHAT THIS TESTS: Does responsibility persist — or disappear at the handoff?

Who owns this outcome until it is resolved?

Can we name the exact point where ownership breaks?

Does the person know what happens next — and who is responsible?

Are we marking completion before resolution?

FAIL SIGNAL: "Submitted" or "completed" without a clear owner or outcome.

PRINCIPLE 4: Define where automation ends

Every system reaches moments where automation is not enough. Most are not designed to recognize them.

A renewal system sends automated pricing and contract updates to a key account. Three months earlier, the account's main champion left the company. No one flagged it. The system had no signal for relationship risk, and the person who could have caught it wasn't in the loop. By the time a human steps in, the conversation has already started with someone who wasn't part of the original decision and has no reason to stay.

The discipline is anticipating where those moments are — defining the signals, the handoff, the context that transfers — so that human judgment arrives when it can still change the outcome.
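As a rough illustration, the renewal example can be expressed as a check that runs before the automated message goes out. Everything here is an assumption made for the sketch: the `Account` and `Handoff` shapes, the single `champion_departed` signal, and the 180-day lookback window.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Account:
    name: str
    days_to_renewal: int
    # None means no champion departure has been recorded.
    days_since_champion_left: Optional[int] = None


@dataclass
class Handoff:
    account: str
    signals: List[str]        # why a human is being pulled in
    context: Dict[str, str]   # what transfers with the handoff


def check_escalation(account: Account, lookback_days: int = 180) -> Optional[Handoff]:
    """Return a Handoff if a human should step in before automation runs."""
    signals = []
    left = account.days_since_champion_left
    # Hypothetical signal: the main champion left within the lookback window.
    if left is not None and left <= lookback_days:
        signals.append("champion_departed")
    if not signals:
        return None  # no defined signal fired; automation may proceed
    return Handoff(
        account=account.name,
        signals=signals,
        context={
            "days_since_champion_left": str(left),
            "days_to_renewal": str(account.days_to_renewal),
        },
    )
```

An account whose champion left 90 days ago produces a handoff with the signal and the context attached; an account with no recorded departure returns `None`. Which signals belong in the list is the design decision this principle asks for.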

WHAT THIS TESTS: Is escalation intentional — or reactive?

Have we defined the moment when the system should step back or hand off?

Can we name the specific signals that trigger escalation?

What context transfers when escalation happens — and what doesn't?

Does escalation occur before the critical moment — or after?

FAIL SIGNAL: Escalation happens only after the system has already failed the person.

PRINCIPLE 5: Measure what actually matters

Every system improves the metrics it is measured against. When those metrics are limited to speed, throughput, and cost, the system gets faster and cheaper while the experience continues to fail the people it was built for.

A contact center reduces handle time and improves first contact resolution rates. The metrics move in the right direction. Meanwhile, customers who didn't call back — not because their problem was solved, but because they gave up — don't appear in any report. The ones who quietly moved to a competitor don't either. The dashboard looks good. The relationship is eroding.

The measures that matter are further downstream — resolution, retention, growth, and the trust that determines whether a customer, patient, or partner stays. Most organizations track what is easy to count. Few connect those numbers to the outcomes that actually drive business performance. That connection has to be designed in — before you build, not after the metrics disappoint.

WHAT THIS TESTS: Are we measuring the system — or the outcome?

Have we defined what winning looks like for the person — before we defined what winning looks like for the system?

Are our measures connected to resolution, trust, and retention — or just speed and throughput?

Would we still ship this if it improved efficiency but reduced trust?

Where in this system are we measuring activity rather than resolution?

FAIL SIGNAL: Metrics improve while experience degrades.

The cost of not doing this work is not a single failure. It is a pattern. Every principle left unapplied becomes a decision the system makes by default: about who gets served well and who doesn't, about where ownership disappears, about when a person is left to absorb what the system couldn't resolve. Those defaults compound quietly. They show up first in repeat contacts and unresolved outcomes. Then in trust that erodes without a visible moment of failure. Then in customers, patients, and partners who quietly choose someone else. Organizations that don't do this work will keep investing in the system while the experience continues to fail the people it was built for.

THE IMPERATIVE: The five questions that will tell you where to start

If you run nothing else, ask these. One from each principle. The answer that makes the room go quiet is where the work begins.

Is there context the person has that the system is acting as if it doesn't need?

Have we defined where the system should adapt or stop — or are we waiting for failure to define it for us?

Can we name the exact point where ownership breaks?

Does escalation occur before the critical moment — or after?

Would we still ship this if it improved efficiency but reduced trust?

The questions above are not for a workshop or an offsite. They belong in the work already in progress — in the review that is already scheduled, the decision that is already being made, the system that is already being built. Any team member can ask them. Any leader can make it safe to do so. That is the only change required to begin.

The leaders who get this right don't wait. They change how the work gets done — with the team already in the room, in the next review, in the next working session. That is where the work begins.